Abstract

Multisensory integration is a salient feature of the brain that enables better and faster responses than unisensory processing, especially when the unisensory cues are weak. Specialized neurons that receive convergent input from two or more sensory modalities are responsible for such multisensory integration. Solid-state devices that can emulate the response of these multisensory neurons can advance neuromorphic computing and bridge the gap between artificial and natural intelligence. Here, we introduce an artificial visuotactile neuron based on the integration of a photosensitive monolayer MoS2 memtransistor and a triboelectric tactile sensor, which faithfully captures the three essential features of multisensory integration, namely, super-additive response, inverse effectiveness effect, and temporal congruency. We have also realized a circuit that can encode visuotactile information into digital spiking events, with the probability of spiking determined by the strength of the visual and tactile cues. We believe that our comprehensive demonstration of a bio-inspired multisensory visuotactile neuron and spike encoding circuitry will advance the field of neuromorphic computing, which has thus far focused primarily on unisensory intelligence and information processing.


Introduction

Relying on vision for navigation in complete darkness is not useful; tactile senses can be far more effective instead. While a hard touch can reveal more information about an object or an obstacle owing to the large neural responses it evokes, a soft touch may be inadequate to evoke neural feedback. However, hard touches can also have undesired consequences, such as damage to an object (e.g., during locomotion inside a dark room containing valuable artwork) or injury to the body in the presence of dangerous entities. In such situations, even a short-lived flash of light can significantly enhance the chance of successful locomotion, because visual memory can subsequently influence and aid the tactile responses used for navigation. This would not be possible if our visual and tactile cortices responded only to their respective unimodal cues. Integration of cross-modal cues is, therefore, one of the essential features of how the brain functions.

In the brain, each sense functions optimally under different circumstances, but collectively the senses can enhance the likelihood of detecting and identifying objects and events. It is commonly believed that dedicated areas in the brain, such as the visual, auditory, somatosensory, gustatory, and olfactory cortices, process sensory input from one modality, whereas cross-modal integration occurs in higher cortical areas. However, recent findings show that multisensory integration can take place in primary sensory areas via specialized neurons that receive convergent inputs from two or more sensory modalities1. For example, S1 neurons found in the primary somatosensory cortex of trained monkeys respond to visual and auditory stimuli in addition to somatosensory inputs2,3. Similarly, A1 neurons in the auditory cortex respond to both auditory and somatosensory cues4. The advantage of multisensory integration is that the multisensory response is super-additive, i.e., it exceeds not only the individual unisensory responses but also their arithmetic sum.

Another key feature of multisensory integration is that multisensory enhancement is typically inversely related to the strength of the individual cues that are being combined5. This is referred to as the inverse effectiveness effect and makes intuitive sense, as highly salient unimodal stimuli will evoke vigorous responses in corresponding unisensory neurons, which can be easily detected. In contrast, weak cues are comparatively difficult to detect via unisensory neurons; in such cases, multisensory integration can substantially enhance neural activity and positively impact animal behavior by increasing the speed and likelihood of detecting and locating an event6,7,8. In other words, multisensory amplification is the greatest when responses evoked by individual stimuli are the weakest. Finally, multisensory integration demonstrates temporal congruency, i.e., the magnitude of the integrated response is sensitive to the temporal correlation between the responses that are initiated by each sensory input9. In other words, the response is maximal when the peak periods of activity coincide.

Examples of multisensory information processing are abundant in nature. Dolphins, for instance, combine auditory cues derived from echoes with their visual system, enabling them to develop a comprehensive understanding of objects, distances, and shapes present in their environment. Honeybees communicate the whereabouts of food sources to their hive mates through intricate dances called “waggle dances.” These dances incorporate visual cues, such as the angle and duration of the waggle, along with odor cues obtained from the nectar, effectively conveying information about the food source’s distance and direction. Electric fish integrate sensory inputs from their electric sense, vision, and mechanosensation to form a comprehensive perception of their surroundings. While multisensory integration has been widely studied in neuroscience, particularly in the context of cognition and behavior, its benefits are yet to be fully utilized in the fields of robotics, artificial intelligence, and neuromorphic computing.

Several recent studies have demonstrated neuromorphic devices that respond to more than one external stimulus. For example, Liu et al.10 demonstrated a stretchable, photoresponsive nanowire transistor that can perceive both tactile and visual information; You et al.11 demonstrated visuotactile integration using piezoresistors and MoS2 field-effect transistors (FETs); Jiang et al.12 used commercial sensors and spike encoding circuits to encode bimodal motion signals, such as acceleration and angular speed, and subsequently integrated the two using a dual-gated MoS2 FET; Wang et al.13 demonstrated gesture recognition by integrating visual data with somatosensory data from stretchable sensors; Yu et al.14 realized a mechano-optic artificial synapse based on a graphene/MoS2 heterostructure and an integrated triboelectric nanogenerator; and Sun et al.15 reported an artificial reflex arc that senses and processes visual and tactile information using a self-powered optoelectronic perovskite (PSK) artificial synapse and controls artificial muscular actions in response to environmental stimuli, with the visual and somatosensory information also encoded as impulse spikes. Similarly, Chen et al.16 reported a CsPbBr3/TiO2-based floating-gate transistor that responds to both light and temperature, and Han et al.17 proposed a fingerprint recognition system based on a single-transistor neuron (1T-neuron) that can integrate visual and thermal stimuli. Finally, Yuan et al.18 demonstrated VO2-based artificial neurons that encode illuminance, temperature, pressure, and curvature signals into spikes, and Liu et al.19 reported an artificial autonomic nervous system that emulates the joint action of sympathetic and parasympathetic nerves on organs to control the contraction and relaxation of artificial pupils and to visually simulate normal and abnormal heart rates.
However, none of the neuromorphic devices mentioned above captures the hallmark features of multisensory integration, i.e., super-additivity, the inverse effectiveness effect, and temporal congruency. Furthermore, except for the study by Sun et al.15, none of these studies demonstrated spike encoding of multisensory information. Here, we introduce a neuromorphic visuotactile device that integrates a triboelectric tactile sensor with a photosensitive monolayer MoS2 memtransistor to mimic the characteristic features and functionalities of a multisensory neuron (MN). A benchmarking table highlighting the advances made in this work over previous demonstrations of multisensory integration is provided in Supplementary Information 1.

Bio-inspired MN

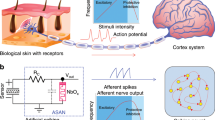

Figure 1a shows a schematic representation of the multisensory integration of visual and tactile information within the biological nervous system, and Fig. 1b shows our bio-inspired visuotactile MN, comprising a tactile sensor connected to the gate terminal of a monolayer MoS2 photo-memtransistor, together with the associated spike encoding circuit. The tactile sensor exploits the triboelectric effect to encode touch stimuli into electrical impulses, which are subsequently transcribed into source-to-drain output current spikes (\(I_{\mathrm{DS}}\)) in the MoS2 photo-memtransistor. Similarly, visual stimuli are encoded into threshold voltage shifts by exploiting the photogating effect in monolayer MoS2 photo-memtransistors. The encoding circuit is also built using MoS2 memtransistors. The entire experimental setup is shown in Supplementary Fig. 1. Figure 1c summarizes the three characteristic features of multisensory integration that our artificial visuotactile neuron and encoding circuitry mimic, i.e., super-additive response to cross-modal cues, the inverse effectiveness effect, and temporal congruency.

a Schematic representation of multisensory integration of visual and tactile information within the biological nervous system. b A bio-inspired visuotactile multisensory neuron (MN) comprising a triboelectric tactile sensor connected to the gate terminal of a monolayer MoS2 photo-memtransistor, along with the associated spike encoding circuit. Electrical impulses generated by the tactile sensor are transcribed into source-to-drain output current spikes (\(I_{\mathrm{DS}}\)) by the MoS2 photo-memtransistor. Similarly, visual stimuli are encoded into threshold voltage shifts by exploiting the photogating effect in monolayer MoS2 photo-memtransistors. The encoding circuit is also built using MoS2 memtransistors to convert analog \(I_{\mathrm{DS}}\) spikes into digital voltage spikes (\(V_{\mathrm{out}}\)). c The three characteristic features of multisensory integration, i.e., super-additive response to cross-modal cues, the inverse effectiveness effect, and temporal congruency, as demonstrated by our bio-inspired visuotactile MN.

In this study, we have used monolayer MoS2 grown via metal–organic chemical vapor deposition (MOCVD) on an epitaxial sapphire substrate at 1000 °C. Details on material synthesis, film transfer, and device fabrication can be found in the Methods section and in our previous works20,21,22,23,24,25,26,27. Preliminary material characterization, including Raman and photoluminescence spectroscopy of monolayer MoS2, and electrical characterization, including the transfer and output characteristics of MoS2 photo-memtransistors measured in the dark, are shown in Supplementary Fig. 2a–d, respectively. All MoS2 devices used in this study have a channel length \(L_{\mathrm{CH}}\) = 1 µm and a channel width \(W_{\mathrm{CH}}\) = 5 µm, as shown in the plan-view optical micrograph in Supplementary Fig. 3. For the cross-sectional transmission electron microscopy (TEM) image and the energy-dispersive X-ray spectroscopy (EDS) data showing the elemental distribution, please refer to our recent study, in which we employed an identical device stack28. Note that while any photo-memtransistor could be used for this demonstration, the choice of monolayer MoS2 is motivated by the fact that, beyond visual27 and tactile29 sensing, MoS2-based transistors can serve as chemical sensors30, gas sensors31, temperature sensors32, and acoustic sensors33, which greatly expands the scope of multisensory integration to gustatory, olfactory, thermal, and auditory sensations as well. In addition, MoS2-based devices have enabled various neuromorphic and bio-inspired applications through the integration of sensing, computing, and storage capabilities34,35,36,37,38,39,40.
Finally, MoS2 is among the most mature two-dimensional (2D) materials and can be grown at wafer scale using chemical vapor deposition techniques20; at the same time, aggressively scaled MoS2-based transistors41 with near-Ohmic contacts42 have achieved high performance that meets the IRDS standards for advanced technology nodes43,44,45.

Unisensory tactile and visual response of the MN

First, we study the response of our MN to tactile and visual stimuli alone. The tactile response is obtained using the triboelectric effect, in which electrical impulses are generated by charge transfer when two dissimilar materials come into contact. For our demonstration, the tactile sensor is composed of a stack of commercially available Kapton and aluminum foil separated by an air gap. PDMS stamps with different surface areas were prepared to serve as the touch stimuli (see Supplementary Fig. 4). Note that the magnitude of the electrical impulse generated by our triboelectric tactile sensor is directly proportional to the surface charge, which depends strongly on the contact surface area (\(T\)). Since the tactile sensor is connected to the gate terminal of the MoS2 photo-memtransistor, the electrical impulse (\(V_{\mathrm{spike}}\)) generated by the touch is encoded as source-to-drain current (\(I_{\mathrm{DS}}\)) spikes at the output of the MN. Figure 2a shows the MN response (\(I_{\mathrm{DS}}\) spikes) for different tactile stimuli under dark conditions (\(I_{\mathrm{LED}}\) = 0 A), with touch inputs having contact surface areas of \(T1\) = 25 mm², \(T2\) = 49 mm², \(T3\) = 100 mm², and \(T4\) = 400 mm². A source-to-drain supply voltage (\(V_{\mathrm{DS}}\)) of 1 V was applied across the MoS2 photo-memtransistor. Supplementary Fig. 5a shows the histogram of \(I_{\mathrm{DS}}\) spikes. All touch inputs to the tactile sensor started from a height of approximately 1 cm with a tapping frequency of ~1 Hz. For any given touch stimulus, there is some inherent variation in the magnitude of the \(I_{\mathrm{DS}}\) spikes, which is typical of the triboelectric effect. However, the magnitude of the \(I_{\mathrm{DS}}\) spikes increases with the size of the touch input, indicating that larger touches convey more tactile information.
Furthermore, the additive tactile response of the MN was investigated by applying two identical touch inputs simultaneously, each with one of the areas listed above. For better visualization of the tactile stimulation, Supplementary Videos 1 and 2 show single and dual touches, respectively. Figure 2b shows the corresponding \(I_{\mathrm{DS}}\) spikes, and Supplementary Fig. 5b shows the corresponding \(I_{\mathrm{DS}}\) histograms. Figure 2c and Supplementary Fig. 5c show bar plots of the median spike magnitude (\(I_{\mathrm{DS-m}}\)) as a function of the strength of the tactile stimuli, i.e., the touch contact area, for both single and dual touches on linear and logarithmic scales, respectively. Clearly, the MN's response is enhanced for dual touch inputs (\(TT\)) compared with a single touch (\(T\)) input, demonstrating the unisensory integration capability of our MN. Figure 2d shows the unisensory integration factor for tactile stimuli (\(\mathrm{UIF}_{\mathrm{T}}\)), which we define as the ratio of \(I_{\mathrm{DS-m}}\) for dual-touch to single-touch responses from the MN, as a function of the strength of \(T\). Note that the unisensory integration is super-additive for the weakest tactile stimulus (\(T1\)), with \(\mathrm{UIF}_{\mathrm{T}}\) ≈ 4.5, whereas for the strongest tactile stimulus (\(T4\)), it becomes nearly additive, with \(\mathrm{UIF}_{\mathrm{T}}\) ≈ 2 (the value expected if the two identical touches simply summed).
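The \(\mathrm{UIF}_{\mathrm{T}}\) definition above reduces to a ratio of medians. The Python sketch below assumes that spike magnitudes have already been extracted from the \(I_{\mathrm{DS}}\) trace as lists of peak currents; the function names, the ±0.1 tolerance used to label a response as additive, and the sample currents are hypothetical and chosen only for illustration, not taken from the measurements.

```python
from statistics import median

def unisensory_integration_factor(dual_spikes, single_spikes):
    """UIF = median(dual-stimulus spikes) / median(single-stimulus spikes).

    Inputs are peak I_DS magnitudes (A), assumed already extracted
    from the measured current trace.
    """
    if not dual_spikes or not single_spikes:
        raise ValueError("need at least one spike per condition")
    m_single = median(single_spikes)
    if m_single <= 0:
        raise ValueError("single-stimulus median must be positive")
    return median(dual_spikes) / m_single

def classify(uif, tol=0.1):
    """Label a UIF; two identical, purely additive inputs give UIF = 2."""
    if uif > 2.0 + tol:
        return "super-additive"
    if uif < 2.0 - tol:
        return "sub-additive"
    return "additive"

# Illustrative (not measured) spike magnitudes in amperes.
single = [1.0e-9, 1.2e-9, 0.8e-9]
dual = [4.0e-9, 4.5e-9, 5.0e-9]
uif = unisensory_integration_factor(dual, single)
print(round(uif, 2), classify(uif))   # 4.5 super-additive
```

With these illustrative inputs the medians are 1.0 nA and 4.5 nA, so the helper reports a super-additive factor of 4.5; the median is used, as in the text, because it is robust to the spike-to-spike scatter typical of triboelectric sensors.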

a Tactile response of the MN, i.e., source-to-drain current (\(I_{\mathrm{DS}}\)) spikes obtained from the MoS2 photo-memtransistor under dark conditions with increasing touch size, \(T1\) = 25 mm², \(T2\) = 49 mm², \(T3\) = 100 mm², and \(T4\) = 400 mm². b Response of the MN to two identical touch inputs applied simultaneously, each with one of the areas listed above. c Bar plot of the extracted median source-to-drain current spike magnitude (\(I_{\mathrm{DS-m}}\)) as a function of touch size for both single (\(T\)) and dual (\(TT\)) touches on a linear scale. d Unisensory integration factor for tactile stimuli (\(\mathrm{UIF}_{\mathrm{T}}\)), defined as the ratio of \(I_{\mathrm{DS-m}}\) for dual- to single-touch responses from the MN, as a function of the strength of \(T\). e Pre-illumination dark current and post-illumination persistent photocurrent response of the MN to different visual stimuli (\(V\)) from a light-emitting diode (LED) with constant input current (\(I_{\mathrm{LED}}\) = 100 mA) and varying illumination time (\(t_{\mathrm{LED}}\)): \(V1\) = 1 s, \(V2\) = 2 s, and \(V3\) = 10 s. Both single (\(V\)) and dual (\(VV\)) illuminations were used to study the unisensory visual integration of the MN. f Bar plot of \(I_{\mathrm{DS-m}}\) as a function of the strength of \(V\) for both single and dual illuminations.

To explain the unisensory integration response of our bio-inspired MN to tactile stimuli, we have developed an empirical model. We use the virtual source (VS) model to describe the MoS2 photo-memtransistor, with parameters extracted from the experimental transfer characteristics34,39,46. In the VS model, both the subthreshold and the above-threshold characteristics of the MoS2 photo-memtransistor are captured through a single semi-empirical relationship, described in Eq. 1.

In Eq. 1, \(R_{\mathrm{CH}}\) is the channel resistance, \(L_{\mathrm{CH}} = 1\ \mu\mathrm{m}\) is the channel length, \(W_{\mathrm{CH}} = 5\ \mu\mathrm{m}\) is the channel width, \(\mu_{\mathrm{N}}\) is the electron mobility in MoS2, \(Q_{\mathrm{CH}}\) is the inversion charge, \(C_{\mathrm{G}}\) is the gate capacitance, \(V_{\mathrm{spike}}\) is the applied gate voltage, \(V_{\mathrm{TH}}\) is the threshold voltage, \(m\) is the band movement factor, \(k_{\mathrm{B}}\) is the Boltzmann constant, \(T_{\mathrm{a}}\) is the temperature, and \(q\) is the elementary charge. \(\mu_{\mathrm{N}}\) was extracted from the peak transconductance and found to be ~8 cm²/(V·s). Similarly, \(V_{\mathrm{TH}}\) was extracted using the iso-current method at 10 nA and found to be ~0.85 V, and \(m\) was extracted from the subthreshold slope, \(\mathrm{SS} = m\,(k_{\mathrm{B}}T_{\mathrm{a}}/q)\ln 10\), and found to be ~4.5. Supplementary Fig. 6 shows the VS model fit to the experimental transfer characteristics. Using this VS model, \(I_{\mathrm{DS}}\) spikes were mapped to their corresponding \(V_{\mathrm{spike}}\) values. Supplementary Fig. 7a, b shows the histograms of \(V_{\mathrm{spike}}\) for single and dual touches of various strengths. The \(V_{\mathrm{spike}}\) distributions were described using Gaussian functions with mean \(\mu_{\mathrm{T}}\) and standard deviation \(\sigma_{\mathrm{T}}\). The strength of the tactile stimulus (\(T\)) is captured through \(\mu_{\mathrm{T}}\), whereas the uncertainty associated with any triboelectric response is captured through \(\sigma_{\mathrm{T}}\). Supplementary Fig. 7c, d, respectively, show \(\mu_{\mathrm{T}}\) and \(\sigma_{\mathrm{T}}/\mu_{\mathrm{T}}\) as a function of \(T\). As expected, \(\mu_{\mathrm{T}}\) increases with increasing strength of \(T\), which we model using the empirical relationship in Eq. 2a with \(\mu_{01}\) = 0.75 V, \(T_{01}\) = 25 mm², \(\mu_{02}\) = 5 V, and \(T_{02}\) = 10000 mm² as the fitting parameters; \(\sigma_{\mathrm{T}}/\mu_{\mathrm{T}}\) = 0.25 was assumed constant. The tactile response was subsequently modeled using Eq. 2b.
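The VS description can be sketched numerically. The Python code below assumes the standard VS expressions consistent with the variables defined above (an assumption, since Eq. 1 is not written out in this section): \(I_{\mathrm{DS}} = V_{\mathrm{DS}}/R_{\mathrm{CH}}\), \(R_{\mathrm{CH}} = L_{\mathrm{CH}}/(W_{\mathrm{CH}}\mu_{\mathrm{N}}Q_{\mathrm{CH}})\), and \(Q_{\mathrm{CH}} = C_{\mathrm{G}}\,m\,(k_{\mathrm{B}}T_{\mathrm{a}}/q)\ln[1+\exp((V_{\mathrm{spike}}-V_{\mathrm{TH}})/(m k_{\mathrm{B}}T_{\mathrm{a}}/q))]\). The values \(\mu_{\mathrm{N}}\) ≈ 8 cm²/(V·s), \(V_{\mathrm{TH}}\) ≈ 0.85 V, \(m\) ≈ 4.5, \(V_{\mathrm{DS}}\) = 1 V, and the 1 µm × 5 µm channel come from the text; \(T_{\mathrm{a}}\) = 300 K is assumed, and the default \(C_{\mathrm{G}}\) = 1.6 × 10⁻³ F/m² is a hypothetical placeholder because the gate capacitance is not given here. The bisection helper illustrates one way to map a measured \(I_{\mathrm{DS}}\) spike back to \(V_{\mathrm{spike}}\); it is not necessarily the procedure used for Supplementary Fig. 7.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
Q_E = 1.602176634e-19   # elementary charge, C

def softplus(x):
    """Numerically stable ln(1 + e^x)."""
    if x > 30.0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))

def subthreshold_slope(m, t_a=300.0):
    """SS = m (k_B T_a / q) ln 10, in volts per decade."""
    return m * (K_B * t_a / Q_E) * math.log(10.0)

def vs_drain_current(v_spike, v_th=0.85, m=4.5, mu_n=8e-4, c_g=1.6e-3,
                     l_ch=1e-6, w_ch=5e-6, v_ds=1.0, t_a=300.0):
    """I_DS (A) from the assumed VS form, SI units throughout.

    mu_n in m^2/(V s) (8e-4 = 8 cm^2/V-s); c_g in F/m^2 is a PLACEHOLDER,
    not a value given in the text.
    """
    phi = m * K_B * t_a / Q_E                             # m k_B T_a / q, V
    q_ch = c_g * phi * softplus((v_spike - v_th) / phi)   # channel charge, C/m^2
    # R_CH = L_CH / (W_CH mu_N Q_CH), so I_DS = V_DS / R_CH = V_DS W mu Q / L
    return v_ds * w_ch * mu_n * q_ch / l_ch

def vspike_from_current(i_ds, lo=-5.0, hi=10.0, iters=100, **params):
    """Invert vs_drain_current by bisection (I_DS increases with V_spike)."""
    if not vs_drain_current(lo, **params) <= i_ds <= vs_drain_current(hi, **params):
        raise ValueError("current outside the bracketing interval")
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if vs_drain_current(mid, **params) < i_ds:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

print(f"SS = {subthreshold_slope(4.5) * 1e3:.0f} mV/dec")   # SS = 268 mV/dec
print(f"I_DS(2 V) = {vs_drain_current(2.0):.2e} A")
```

At 300 K, \(m\) = 4.5 corresponds to an SS of roughly 268 mV/decade, far above the ~60 mV/decade thermionic limit, which is why the device's OFF-state response to small gate impulses is gradual rather than abrupt.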

The super-additive response of the MN to weaker tactile stimuli can be explained by the fact that when \(V_{\mathrm{spike}} < V_{\mathrm{TH}}\), the MN operates in the subthreshold regime, where the magnitude of the \(I_{\mathrm{DS}}\) spikes increases exponentially with \(V_{\mathrm{spike}}\) and hence with the strength of \(T\), whereas for \(V_{\mathrm{spike}} > V_{\mathrm{TH}}\), the MN operates in the linear regime, leading to nearly additive unisensory integration. Figure 2d shows the model-derived \(\mathrm{UIF}_{\mathrm{T}}\), which captures the experimental findings.
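The regime argument above can be checked numerically. In the VS form assumed here, \(I_{\mathrm{DS}}\) scales with \(\ln[1+\exp((V_{\mathrm{spike}}-V_{\mathrm{TH}})/(m k_{\mathrm{B}}T_{\mathrm{a}}/q))]\), so all prefactors cancel in a dual-to-single ratio. The Python sketch below makes one simplifying assumption of its own, labeled as hypothetical: a dual touch is represented as a doubled \(V_{\mathrm{spike}}\), rather than through Eqs. 2a and 2b. It uses \(V_{\mathrm{TH}}\) = 0.85 V and \(m\) = 4.5 from the text and assumes \(T_{\mathrm{a}}\) = 300 K.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
Q_E = 1.602176634e-19   # elementary charge, C

def softplus(x):
    """Numerically stable ln(1 + e^x)."""
    return x + math.log1p(math.exp(-x)) if x > 30.0 else math.log1p(math.exp(x))

def dual_to_single_ratio(v_spike, v_th=0.85, m=4.5, t_a=300.0):
    """I_DS(2 V_spike) / I_DS(V_spike) in the assumed VS form.

    Doubling V_spike is a HYPOTHETICAL stand-in for a dual touch. Geometry,
    mobility, gate capacitance, and V_DS cancel in the ratio.
    """
    phi = m * K_B * t_a / Q_E    # m k_B T_a / q, about 0.116 V here
    return softplus((2.0 * v_spike - v_th) / phi) / softplus((v_spike - v_th) / phi)

for v in (0.4, 0.8, 1.5, 3.0, 10.0):
    regime = "subthreshold" if v < 0.85 else "above threshold"
    print(f"V_spike = {v:4.1f} V ({regime}): ratio = {dual_to_single_ratio(v):.2f}")
```

With these parameters, a 0.4 V spike (well below threshold) gives a ratio of roughly 24, whereas a 3 V spike gives roughly 2.4, approaching the additive value of 2 as \(V_{\mathrm{spike}}\) rises further above \(V_{\mathrm{TH}}\). This reproduces the qualitative trend of \(\mathrm{UIF}_{\mathrm{T}}\), although the numbers are not meant to match Fig. 2d.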

Next, we evaluate the unisensory visual response of our artificial MN. The visual response is obtained by exposing the MN to illumination pulses of constant amplitude (\(I_{\mathrm{LED}}\) = 100 mA) and varying duration (\(t_{\mathrm{LED}}\)) from a light-emitting diode (LED). During illumination, photocarriers generated in the monolayer MoS2 channel are trapped at the channel/dielectric interface, which leads to a negative shift in the transfer characteristics of the photo-memtransistor that persists even after the illumination ends. Supplementary Fig. 8a shows the transfer characteristics of the MoS2 photo-memtransistor after exposure to different visual stimuli (\(V\)), i.e., \(V1\) = 1 s, \(V2\) = 2 s, and \(V3\) = 10 s, and Supplementary Fig. 8b shows the extracted \(V_{\mathrm{TH}}\) as a function of \(V\). This persistent shift in \(V_{\mathrm{TH}}\) is known as the photogating effect and is exploited in many neuromorphic devices and vision sensors22,27,47,48,49,50,51,52 (see Supplementary Information 2 for further discussion of the photogating effect). In our demonstration, it emulates the role of visual memory in enhancing the tactile response through multisensory integration. Figure 2e shows the pre-illumination dark current and post-illumination persistent photocurrent response of the MN for different \(V\). As expected, the MN's response (\(I_{\mathrm{DS}}\)) increases monotonically with the strength of \(V\). Figure 2e also shows the response of the MN when two identical visual stimuli (\(VV\)) are applied. Figure 2f shows the bar plot of \(I_{\mathrm{DS-m}}\) as a function of the strength of \(V\) for both single and dual illuminations. Supplementary Fig. 9 shows the unisensory integration factor for visual stimuli (\(\mathrm{UIF}_{\mathrm{V}}\)), which we define as the ratio of the MN's response to dual illumination to that for single illumination, as a function of the strength of \(V\). \(\mathrm{UIF}_{\mathrm{V}}\) ranges from ~1.5 to 2, which confirms that unisensory integration of visual cues is sub-additive to additive and highlights the unisensory integration capability of our artificial MN for visual stimuli.

Multisensory visuotactile integration

In this section, we evaluate the response of the MN to cross-modal cues and compare the results with the corresponding unisensory responses. Figure 3a shows the \(I_{\mathrm{DS}}\) spikes obtained from the MN for different combinations of tactile (\(T\)) and visual (\(V\)) cues, and Fig. 3b shows a bar plot of the extracted \(I_{\mathrm{DS-m}}\) as a function of \(T\) and \(V\) (see Supplementary Fig. 10 for the \(I_{\mathrm{DS}}\) histograms). As expected, the response of the MN increases monotonically with the strength of \(T\) for any given \(V\). However, the response of the MN also decreases monotonically with increasing strength of \(V\). This is the so-called inverse effectiveness effect. The physical origin of this effect lies in the screening of the triboelectric gate voltage, generated by the touch stimuli, by the interface-trapped charges induced by the visual stimuli: with increasing strength of the visual stimuli, more photogenerated carriers become trapped at the interface, screening the triboelectric voltage more strongly. This effect parallels its biological counterpart, i.e., a clear visual memory naturally diminishes the sensitivity to tactile stimuli. Our empirical model captures this effect by introducing a visual-memory-induced screening factor (\(\alpha_{\mathrm{V}}\)) for \(V_{\mathrm{spike}}\). The MN's response to cross-modal visual and tactile stimuli can be described by Eqs. 3a–3c.

a \(I_{\mathrm{DS}}\) spikes obtained from the MN for different combinations of tactile (\(T\)) and visual (\(V\)) cues. b Bar plots of extracted \(I_{\mathrm{DS-m}}\) as a function of \(T\) and \(V\). While the response of the MN increases with increasing strength of \(T\) for any given \(V\), as expected, the monotonic decrease in the response of the MN with increasing strength of \(V\) confirms the inverse effectiveness effect. c \(I_{\mathrm{DS-m}}\) obtained from an empirical model developed to describe the response of the MN to tactile and visual stimuli, which also exhibits the inverse effectiveness effect. d Comparison of \(I_{\mathrm{DS-m}}\) obtained from the MN in the presence of multimodal (\(VT\)) and corresponding unimodal cues. Each graph represents the results for a different \(V\), and each group of bars within a graph represents results for a different \(T\); from left to right, the bars within a group represent \(I_{\mathrm{DS-m}}\) for \(V\), \(T\), and \(VT\). e Multisensory integration factor (\(\mathrm{MIF}\)), defined as the ratio of \(I_{\mathrm{DS-m}}\) for the multisensory response to the sum of \(I_{\mathrm{DS-m}}\) for the individual unisensory responses, as a function of \(T\) and \(V\). Note that \(\mathrm{MIF} \gg 1\) for all combinations of \(T\) and \(V\), confirming the super-additive nature of multisensory integration by our artificial MN.

Supplementary Fig. 11a, b, respectively, show the experimentally obtained and model-fitted \(V_{\mathrm{TH,V}}\) and \(\alpha_{\mathrm{V}}\). Figure 3c shows the bar plot of \(I_{\mathrm{DS-m}}\) obtained using the empirical model as a function of \(T\) and \(V\), which clearly exhibits the inverse effectiveness effect. Next, we assess the benefits of multisensory integration over unisensory responses. Figure 3d compares \(I_{\mathrm{DS-m}}\) obtained from the MN in the presence of multimodal (\(VT\)) and corresponding unimodal cues. Clearly, the multisensory response exceeds the unisensory responses, as well as their sum, irrespective of the strength of \(T\) and \(V\). To evaluate the effectiveness of multisensory integration, we define the multisensory integration factor (\(\mathrm{MIF}\)) as the ratio of the multisensory response (\(I_{\mathrm{DS-m,VT}}\)) to the sum of the individual unisensory responses (\(I_{\mathrm{DS-m,V}}\) and \(I_{\mathrm{DS-m,T}}\) for the visual and tactile cues alone), given by Eq. 4:

\[ \mathrm{MIF} = \frac{I_{\mathrm{DS-m,VT}}}{I_{\mathrm{DS-m,V}} + I_{\mathrm{DS-m,T}}} \qquad (4) \]

Figure 3e shows \(\mathrm{MIF}\) as a function of the \(T\) and \(V\) stimuli. Note that \(\mathrm{MIF} \gg 1\) for all combinations of \(T\) and \(V\), confirming the super-additive nature of multisensory integration by our artificial MN. \(\mathrm{MIF}\) can be as high as ~26.4 when the illumination period is the shortest (\(V1\) = 1 s) and the touch area is the smallest (\(T1\) = 25 mm²); conversely, \(\mathrm{MIF}\) drops to ~3.3 when the illumination period is the longest (\(V3\) = 10 s) and the touch area is the largest (\(T4\) = 400 mm²). In other words, \(\mathrm{MIF}\) decreases monotonically as the strength of the individual cues increases. This makes intuitive sense: offering the greatest multisensory enhancement for the weakest cues can be critical for the survival of a species, whereas diminishing the enhancement when the cues are stronger ensures that the nervous system is not overwhelmed by the multisensory response, highlighting the importance of the inverse effectiveness effect. The inverse effectiveness effect found in the experimentally extracted \(\mathrm{MIF}\) is also reproduced by the empirical model, as shown in Supplementary Fig. 12.
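The MIF definition, \(\mathrm{MIF} = I_{\mathrm{DS-m,VT}}/(I_{\mathrm{DS-m,V}} + I_{\mathrm{DS-m,T}})\), and the inverse effectiveness trend can both be expressed compactly. The Python sketch below computes MIF from median responses and checks whether a grid of MIF values decreases monotonically along both the visual and the tactile axes. The function names and all numbers in the example are hypothetical; only the qualitative pattern (largest MIF for the weakest cues) comes from the text.

```python
def mif(i_vt, i_v, i_t):
    """Multisensory integration factor: I_DS-m,VT / (I_DS-m,V + I_DS-m,T)."""
    denom = i_v + i_t
    if denom <= 0:
        raise ValueError("unisensory medians must sum to a positive value")
    return i_vt / denom

def is_super_additive(i_vt, i_v, i_t):
    """Super-additive: the multisensory response exceeds the unisensory sum."""
    return mif(i_vt, i_v, i_t) > 1.0

def shows_inverse_effectiveness(grid):
    """True if MIF strictly decreases along every row and every column.

    grid[i][j] is the MIF for visual strength i and tactile strength j,
    with both indices ordered from the weakest to the strongest cue.
    """
    rows_ok = all(a > b for row in grid for a, b in zip(row, row[1:]))
    cols_ok = all(a > b for col in zip(*grid) for a, b in zip(col, col[1:]))
    return rows_ok and cols_ok

# Illustrative values only (currents in amperes and MIF values are made up).
print(mif(2.0e-7, 5.0e-9, 3.0e-9))                             # approximately 25
print(shows_inverse_effectiveness([[20.0, 9.0], [8.0, 3.0]]))  # True
```

Requiring a strict decrease along both axes is a deliberately stringent reading of "decreases monotonically as the strength of the individual cues increases"; a looser test could compare only the weakest and strongest corners.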

Next, we compare the effectiveness of multisensory integration against unisensory integration. Figure 4a compares \(I_{\mathrm{DS-m}}\) obtained through multisensory integration (\(VT\)) with that obtained through unisensory integration of visual (\(VV\)) and tactile (\(TT\)) cues of different strengths. Clearly, the response due to multisensory integration exceeds the response obtained through unisensory integration, irrespective of the strengths of the individual sensory cues. To quantify this advantage, we define \(\mathrm{MIF}_{\mathrm{T}}\) and \(\mathrm{MIF}_{\mathrm{V}}\) as the ratios of \(I_{\mathrm{DS-m}}\) obtained through multisensory integration (\(VT\)) to that obtained through unisensory integration, i.e., \(TT\) and \(VV\), respectively. Figure 4b, c shows \(\mathrm{MIF}_{\mathrm{T}}\) and \(\mathrm{MIF}_{\mathrm{V}}\), respectively. Both \(\mathrm{MIF}_{\mathrm{T}}\) and \(\mathrm{MIF}_{\mathrm{V}}\) exceed 1 irrespective of the strength of the \(T\) and \(V\) stimuli, which reinforces the super-additive nature of multisensory integration. For example, \(\mathrm{MIF}_{\mathrm{T}}\) can be as high as ~7 when the \(T\) and \(V\) cues are both weak, and it decreases to ~1.1 for a stronger tactile input. In other words, \(\mathrm{MIF}_{\mathrm{T}}\) also demonstrates the inverse effectiveness effect with respect to the strength of the tactile stimulus. Similarly, \(\mathrm{MIF}_{\mathrm{V}}\) can be as high as ~66 when both the \(T\) and \(V\) cues are weak. While \(\mathrm{MIF}_{\mathrm{V}}\), as expected, increases with increasing strength of \(T\) for any given \(V\), it decreases monotonically with increasing strength of \(V\) for all \(T\). In other words, \(\mathrm{MIF}_{\mathrm{V}}\) demonstrates the inverse effectiveness effect with respect to the strength of the visual stimulus.
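The two ratios \(\mathrm{MIF}_{\mathrm{T}} = I_{\mathrm{DS-m,VT}}/I_{\mathrm{DS-m,TT}}\) and \(\mathrm{MIF}_{\mathrm{V}} = I_{\mathrm{DS-m,VT}}/I_{\mathrm{DS-m,VV}}\) can be computed side by side. In this Python sketch the function names are hypothetical and the example currents are illustrative rather than measured; it encodes the criterion that a cross-modal pairing counts as better than unisensory integration only when it beats both the dual-touch and the dual-illumination responses for the same cue strengths.

```python
def cross_vs_unisensory(i_vt, i_tt, i_vv):
    """Return (MIF_T, MIF_V) = (I_VT / I_TT, I_VT / I_VV).

    Each input is a median spike magnitude I_DS-m for the same T and V
    strengths: VT = combined cue, TT = dual touch, VV = dual illumination.
    """
    if i_tt <= 0 or i_vv <= 0:
        raise ValueError("unisensory-integration responses must be positive")
    return i_vt / i_tt, i_vt / i_vv

def beats_unisensory_integration(i_vt, i_tt, i_vv):
    """True when the cross-modal response exceeds both same-modality pairings."""
    mif_t, mif_v = cross_vs_unisensory(i_vt, i_tt, i_vv)
    return mif_t > 1.0 and mif_v > 1.0

# Illustrative (not measured) medians in amperes.
mif_t, mif_v = cross_vs_unisensory(7.0e-8, 1.0e-8, 2.0e-8)
print(round(mif_t, 2), round(mif_v, 2))   # 7.0 3.5
```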

a Bar plots of \(I_{\mathrm{DS-m}}\) obtained through multisensory integration (\(VT\)) and unisensory integration for different strengths of visual (\(VV\)) and tactile (\(TT\)) cues. Each graph represents the results for a different \(V\), and each group of bars within a graph represents results for a different \(T\); from left to right, the bars within a group represent \(I_{\mathrm{DS-m}}\) for \(VV\), \(TT\), and \(VT\). Multisensory integration factor for b tactile integration (\(\mathrm{MIF}_{\mathrm{T}}\)) and c visual integration (\(\mathrm{MIF}_{\mathrm{V}}\)). Both \(\mathrm{MIF}_{\mathrm{T}}\) and \(\mathrm{MIF}_{\mathrm{V}}\) exceed 1 irrespective of the strength of the \(T\) and \(V\) stimuli, confirming the advantage of multisensory integration over unisensory integration. d Bar plot of \(I_{\mathrm{DS-m}}\) as a function of the temporal lag (\(\Delta\tau\)) between \(V\) and \(T\) for different \(T\). The monotonic decrease in \(I_{\mathrm{DS-m}}\) with increasing \(\Delta\tau\) confirms that our artificial MN exhibits temporal congruency. e Long-term temporal response of the MN after exposure to visual stimuli. The monotonic decay in persistent photocurrent can be attributed to the gradual detrapping of photocarriers trapped at the dielectric/MoS2 interface.

Next, we investigate the temporal congruency offered by our MN. As mentioned earlier, biological MNs show the highest response when cross-modal cues appear simultaneously, and the response falls off monotonically with increasing lag between the cues. Supplementary Fig. 13 shows the response of the MN to touch stimuli as a function of the lag (\(\Delta\tau\)) between the tactile (\(T\)) and visual (\(V\)) stimuli, and Fig. 4d shows the corresponding bar plots of \(I_{\mathrm{DS}-\mathrm{m}}\) as a function of \(\Delta\tau\) for different \(T\). \(I_{\mathrm{DS}-\mathrm{m}}\) decreases monotonically with increasing \(\Delta\tau\), confirming that our artificial MN exhibits temporal congruency. The physical origin of temporal congruency lies in the fact that the persistent photocurrent in MoS2 photo-memtransistors is a direct consequence of photocarrier trapping at the MoS2/dielectric interface; over time, detrapping gradually resets the device to its pre-illumination conductance state. Figure 4e shows the long-term temporal response of the MN after exposure to visual stimuli: the post-illumination \(I_{\mathrm{DS}-\mathrm{m}}\) decreases monotonically, which can be regarded as a gradual loss of visual memory. Consequently, tactile cues that appear long after the visual cue are expected to evoke significantly weaker responses. The detrapping process, which leads to a monotonic decrease in \(I_{\mathrm{DS}}\) or, equivalently, a monotonic increase in \(V_{\mathrm{TH}}\), can be described by an exponential decay function with a time constant \(\tau_{\mathrm{detrap}}\) = 260 s, given by Eq. 5.

By combining Eqs. 2, 3, and 5, the phenomenon of temporal congruency can be captured using an empirical model.
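
The sketch below illustrates why a single exponential with \(\tau_{\mathrm{detrap}}\) = 260 s produces temporal congruency. It assumes, for illustration only, that the photo-induced threshold shift relaxes as \(\Delta V_{\mathrm{TH}}(t) = \Delta V_{\mathrm{TH}}(0)\,e^{-t/\tau_{\mathrm{detrap}}}\) and that a tactile cue arriving after a lag \(\Delta\tau\) sees only the remaining shift. This is not a reproduction of Eq. 5, and the initial shift of −1 V is a hypothetical value.

```python
"""Sketch: visual memory retained after a lag, assuming single-exponential detrapping.

ASSUMPTION: the photo-induced threshold shift decays as
    dV_TH(t) = dV_TH(0) * exp(-t / TAU_DETRAP_S)
with TAU_DETRAP_S = 260 s taken from the text; dV_TH(0) is hypothetical.
"""
import math

TAU_DETRAP_S = 260.0   # detrapping time constant quoted in the text
DVTH0_V = -1.0         # HYPOTHETICAL initial (negative) threshold shift after illumination


def retained_fraction(lag_s: float, tau_s: float = TAU_DETRAP_S) -> float:
    """Fraction of the photo-induced shift still present after `lag_s` seconds."""
    if lag_s < 0:
        raise ValueError("lag must be non-negative")
    return math.exp(-lag_s / tau_s)


def threshold_shift(lag_s: float) -> float:
    """Remaining threshold shift (V) seen by a tactile cue arriving after `lag_s`."""
    return DVTH0_V * retained_fraction(lag_s)


if __name__ == "__main__":
    for lag in (0, 60, 260, 600, 1200):
        print(f"lag = {lag:5d} s  retained = {retained_fraction(lag):.3f}  "
              f"dV_TH = {threshold_shift(lag):+.3f} V")
```

Under this assumption, about 37% of the shift remains after one time constant (260 s) and roughly 10% after 600 s, so a touch arriving that late sees a threshold close to its dark-state value.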

Note that it is critical to strike a balance between the visual and tactile responses for proper functioning of the MoS2 photo-memtransistor-based MN. This can be accomplished by ensuring that \(V_{\mathrm{TH}-\mathrm{V}}\) and \(V_{\mathrm{spike},\mathrm{T}}\) are of similar magnitude. To do so, the expected strengths of the visual (\(I_{\mathrm{LED}}, t_{\mathrm{LED}}, \lambda_{\mathrm{LED}}\)) and tactile (\(T\)) stimuli must first be determined based on the application requirements and the operating environment. Next, Eqs. 1–5 can be solved self-consistently and iteratively to arrive at the required device dimensions, as shown schematically in Supplementary Fig. 14. It is also important to understand how various device parameters influence multisensory integration. Supplementary Fig. 15a–c show the dependence of \(\mathrm{MIF}\) on \(\mathrm{SS}\), \(\mu_{\mathrm{N}}\), and \(V_{\mathrm{TH}}\), respectively, for the weakest tactile and visual stimuli. \(\mu_{\mathrm{N}}\) has the least influence on \(\mathrm{MIF}\), since it is related to the ON-state performance of the photo-memtransistor, whereas visuotactile responses are generated in the OFF-state. By contrast, and as expected, both \(V_{\mathrm{TH}}\) and \(\mathrm{SS}\) have a significant impact on \(\mathrm{MIF}\), with a more positive \(V_{\mathrm{TH}}\) and a lower magnitude of \(\mathrm{SS}\) leading to improved \(\mathrm{MIF}\). The dependence of \(\mathrm{MIF}\) on these device parameters can become a critical design consideration when an ensemble of multisensory neurons is present. Supplementary Fig. 15d shows the transfer characteristics of 100 multisensory neurons, and Supplementary Fig. 15e–g show the corresponding neuron-to-neuron variation in \(\mathrm{SS}\), \(V_{\mathrm{TH}}\), and \(\mu_{\mathrm{N}}\), respectively. The mean values of \(\mathrm{SS}\), \(V_{\mathrm{TH}}\), and \(\mu_{\mathrm{N}}\) were found to be 255 mV/decade, 0.42 V, and 10.37 cm² V⁻¹ s⁻¹, respectively, with corresponding standard deviations of 26 mV/decade, 0.15 V, and 6.3 cm² V⁻¹ s⁻¹. Supplementary Fig. 15h shows the projected neuron-to-neuron variation in \(\mathrm{MIF}\) based on the model discussed earlier. Note that the inherent variation in the tactile response was already built into the model, as described in Eq. 2. The mean value of \(\mathrm{MIF}\) was found to be ~18.4, with a standard deviation of ~7.3. The neuron-to-neuron variation can be reduced and device performance improved through further optimization of the synthesis, transfer, and cleanliness of the processes associated with device fabrication (see Supplementary Information 3 for more discussion). Finally, the impact of temperature on the performance of multisensory neurons is discussed in Supplementary Fig. 16.
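
To put the spreads quoted above on a common footing, the coefficient of variation (CV = σ/mean) can be computed directly from the reported means and standard deviations. The sketch uses only those reported numbers; the CV comparison itself is our illustration. It shows that \(\mu_{\mathrm{N}}\) has by far the largest relative spread (about 61%), even though it is the parameter with the least influence on \(\mathrm{MIF}\). The CV of \(V_{\mathrm{TH}}\) should be read with care, because a threshold voltage has no natural zero and its 0.15 V spread is more meaningful in absolute terms.

```python
"""Sketch: relative neuron-to-neuron spread from the statistics quoted in the text.

Means and standard deviations are the values reported for 100 neurons
(Supplementary Fig. 15e-h). CV for V_TH is only indicative, since a
threshold voltage has no natural zero point.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class Spread:
    name: str
    mean: float
    std: float
    unit: str

    @property
    def cv(self) -> float:
        """Coefficient of variation, sigma / mean (dimensionless)."""
        return self.std / self.mean


REPORTED = [
    Spread("SS", 255.0, 26.0, "mV/decade"),
    Spread("V_TH", 0.42, 0.15, "V"),
    Spread("mu_N", 10.37, 6.3, "cm^2 V^-1 s^-1"),
    Spread("MIF", 18.4, 7.3, "-"),
]

if __name__ == "__main__":
    for s in sorted(REPORTED, key=lambda s: s.cv, reverse=True):
        print(f"{s.name:5s} mean = {s.mean:g} {s.unit}, sigma = {s.std:g}, CV = {s.cv:.1%}")
```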

Spike encoding of visuotactile cross-modal cues

The above demonstrations establish that our bio-inspired visuotactile neuron exhibits all the characteristic features of multisensory integration. However, unlike in the brain, where information is encoded into digital spike trains, the response of our MN is analog. To convert the analog current responses into digital spikes, we use a circuit comprising four monolayer MoS2 photo-memtransistors, \(\mathrm{MT}1\), \(\mathrm{MT}2\), \(\mathrm{MT}3\), and \(\mathrm{MT}4\), as shown in Fig. 5a (optical image shown in Fig. 1b). Since the gate and drain terminals are shorted for both \(\mathrm{MT}1\) and \(\mathrm{MT}3\), these photo-memtransistors are always in saturation and act as depletion loads. Figure 5b shows the voltage, \(V_{\mathrm{N}2}\), measured at node \(\mathrm{N}2\) as a function of the input voltage, \(V_{\mathrm{N}3}\), applied to node \(\mathrm{N}3\), i.e., the gate terminal of \(\mathrm{MT}2\), under dark conditions and after exposure to different visual stimuli (\(V\)). For \(V_{\mathrm{N}3}\) = −2 V, \(\mathrm{MT}2\) is in the OFF-state (open circuit), pulling \(V_{\mathrm{N}2}\) up to \(V_{\mathrm{DD}}\) = 2 V, which is applied to the source terminal of \(\mathrm{MT}1\), i.e., node \(\mathrm{N}1\). Similarly, for \(V_{\mathrm{N}3}\) = 2 V, \(\mathrm{MT}2\) is in the ON-state (short circuit), pulling \(V_{\mathrm{N}2}\) down to \(V_{\mathrm{GND}}\) = 0 V, which is applied to the drain terminal of \(\mathrm{MT}2\), i.e., node \(\mathrm{N}4\). This explains why \(V_{\mathrm{N}2}\) switches from 2 V to 0 V as \(V_{\mathrm{N}3}\) is swept from −2 V to 2 V. In other words, \(\mathrm{MT}1\) and \(\mathrm{MT}2\) operate as a depletion-mode inverter. In the transfer curve shown in Fig. 5b, the value of \(V_{\mathrm{N}3}\) at which \(V_{\mathrm{N}2} = V_{\mathrm{DD}}/2\) is defined as the inversion threshold (\(V_{\mathrm{Inv}}\)). Note that \(V_{\mathrm{Inv}}\) decreases monotonically with exposure to stronger visual stimuli, owing to the photogating effect, which results in a negative shift in the \(V_{\mathrm{TH}}\) of \(\mathrm{MT}2\). \(\mathrm{MT}3\) and \(\mathrm{MT}4\) share the same configuration and therefore form a second inverter stage that inverts \(V_{\mathrm{N}2}\). Figure 5c shows the voltage, \(V_{\mathrm{N}5}\), measured at node \(\mathrm{N}5\) as a function of \(V_{\mathrm{N}3}\) under the same visual stimuli (\(V\)). The circuit comprising \(\mathrm{MT}1\), \(\mathrm{MT}2\), \(\mathrm{MT}3\), and \(\mathrm{MT}4\) thus operates as a two-stage cascaded inverter that converts an analog input voltage, \(V_{\mathrm{N}3}\), into a digital output, \(V_{\mathrm{N}5}\); at the same time, this circuit offers visual memory, which in turn determines the spiking threshold, \(V_{\mathrm{TH}-\mathrm{spike}}\), for the tactile stimulus (\(T\)).
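
The role of \(V_{\mathrm{Inv}}\) as a light-programmable threshold can be sketched with a purely behavioral model. The snippet below is not a device model of the MoS2 circuit: it assumes a logistic transfer curve for each inverter stage with a hypothetical transition width, a hypothetical dark-state switching point of 0.5 V, and a negative photogating shift of that switching point under illumination. It locates \(V_{\mathrm{Inv}}\) by bisection as the input at which \(V_{\mathrm{N}2} = V_{\mathrm{DD}}/2\), and it shows that cascading two inverting stages yields a non-inverted, digital-like \(V_{\mathrm{N}5}\).

```python
"""Behavioral sketch of the two-stage cascaded inverter (not a device model).

ASSUMPTIONS (hypothetical): each stage has a logistic transfer curve of width
WIDTH_V, the dark-state switching point of the first stage is V_INV_DARK, and
visual exposure shifts that switching point by dv_photo < 0 (photogating of MT2).
V_DD = 2 V and the -2 V to 2 V input sweep follow the text.
"""
import math

V_DD = 2.0          # supply voltage at node N1 (from the text)
WIDTH_V = 0.1       # HYPOTHETICAL transition width of each stage (V)
V_INV_DARK = 0.5    # HYPOTHETICAL first-stage switching point in the dark (V)


def inverter(v_in: float, v_switch: float) -> float:
    """Logistic inverter: ~V_DD well below v_switch, ~0 V well above it."""
    return V_DD / (1.0 + math.exp((v_in - v_switch) / WIDTH_V))


def v_n2(v_n3: float, dv_photo: float = 0.0) -> float:
    """First stage (MT1/MT2); light lowers its switching point."""
    return inverter(v_n3, V_INV_DARK + dv_photo)


def v_n5(v_n3: float, dv_photo: float = 0.0) -> float:
    """Second stage (MT3/MT4) re-inverts V_N2, giving a digital-like output."""
    return inverter(v_n2(v_n3, dv_photo), V_DD / 2)


def find_v_inv(dv_photo: float = 0.0, lo: float = -2.0, hi: float = 2.0,
               tol: float = 1e-9) -> float:
    """Bisection for the input V_N3 at which V_N2 = V_DD / 2."""
    def excess(v: float) -> float:
        return v_n2(v, dv_photo) - V_DD / 2  # positive below V_Inv, negative above

    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if excess(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    for dv in (0.0, -0.3, -0.6):  # dark, weaker light, stronger light (hypothetical)
        print(f"dV_photo = {dv:+.1f} V: V_Inv = {find_v_inv(dv):+.3f} V, "
              f"V_N5 at -2 V = {v_n5(-2.0, dv):.2f} V, at 2 V = {v_n5(2.0, dv):.2f} V")
```

In this picture, and assuming the tactile signal drives node N3, a stronger visual cue lowers \(V_{\mathrm{Inv}}\), so a weaker touch suffices to flip \(V_{\mathrm{N}5}\) high.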

a Circuit diagram of the spike encoder comprising four monolayer MoS2 photo-memtransistors (\(\mathrm{MT}1\)–\(\mathrm{MT}4\)). The circuit operates as a two-stage cascaded inverter. b Voltage, \(V_{\mathrm{N}2}\), measured at node \(\mathrm{N}2\), and c voltage, \(V_{\mathrm{N}5}\), measured at node \(\mathrm{N}5\), as a function of the input voltage, \(V_{\mathrm{N}3}\), applied to node \(\mathrm{N}3\) under dark conditions and after exposure to different visual stimuli (\(V\)). The inversion threshold (\(V_{\mathrm{Inv}}\)) of the first-stage inverter and the spiking threshold (\(V_{\mathrm{TH}-\mathrm{spike}}\)) of the second-stage inverter are functions of the applied visual stimulus. d Spiking output of the encoding circuit, recorded at node \(\mathrm{N}5\) in response to different tactile (\(T\)) and visual (\(V\)) stimuli. e Bar plot of the spiking probability (\(P_{\mathrm{spike}}\)) for all combinations of \(T\) and \(V\). f Corresponding bar plots of the probability enhancement factor (\(\mathrm{PEF}\)), obtained as the ratio of post-illumination \(P_{\mathrm{spike}}\) to pre-illumination \(P_{\mathrm{spike}}\). g Comparison of \(P_{\mathrm{spike}}\) obtained for single (\(T\)) and dual (\(TT\)) touches in the dark for all touch sizes. Inset shows the probability enhancement factor for unisensory tactile integration (\(\mathrm{PEF}_{\mathrm{T}}\)).

Figure 5d shows the spiking response of the multisensory neural circuit for different combinations of \(V\) and \(T\). The analog current response has now been converted into digital voltage spikes, with the spiking probability (\(P_{\mathrm{spike}}\)) encoding the strengths of \(V\) and \(T\). Figure 5e shows the bar plot of the extracted \(P_{\mathrm{spike}}\) for different combinations of \(V\) and \(T\). As expected, \(P_{\mathrm{spike}}\) increases monotonically with increasing strength of \(V\) and \(T\) before saturating at its maximum value of 1. Figure 5f shows the bar plot of the probability enhancement factor (\(\mathrm{PEF}\)), defined as the ratio of post-illumination \(P_{\mathrm{spike}}\) to pre-illumination \(P_{\mathrm{spike}}\), as a function of \(V\) and \(T\). Note that \(\mathrm{PEF} \gg 1\) for all combinations of \(V\) and \(T\), i.e., the spike encoder preserves the super-additive nature of multisensory integration. Also, \(\mathrm{PEF}\) increases with increasing strength of \(V\), as \(V_{\mathrm{TH}-\mathrm{spike}}\) is reduced, and can reach as high as ~24 for the weakest tactile cue, \(\mathrm{T}1\). Moreover, \(\mathrm{PEF}\) is largest for the weakest \(T\) and decreases monotonically with increasing strength of \(T\) for any given \(V\). In other words, \(\mathrm{PEF}\) also demonstrates the inverse effectiveness effect with respect to \(T\). Finally, Fig. 5g shows bar plots of \(P_{\mathrm{spike}}\) for single (\(T\)) and dual (\(TT\)) tactile stimuli under dark conditions; the inset shows the probability enhancement factor for unisensory tactile integration (\(\mathrm{PEF}_{\mathrm{T}}\)) (see Supplementary Fig. 17 for the spiking response of the multisensory neural circuit to dual touches of different strengths, \(TT\)). 
Note that \(\mathrm{PEF}_{\mathrm{T}} \gg 1\) for all \(T\), confirming that the super-additive nature of unisensory integration is also preserved by the spike-encoding circuit.
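
One plausible way to estimate these quantities from repeated trials is sketched below: \(P_{\mathrm{spike}}\) as the fraction of tactile events that produce an output spike at node N5, and \(\mathrm{PEF}\) as the post-illumination over the pre-illumination probability. The trial counts are hypothetical, and returning infinity when the dark condition never spikes is our own convention, not one stated in the text.

```python
"""Sketch: spiking probability and probability enhancement factor (PEF).

Trial counts are HYPOTHETICAL; P_spike is estimated as spikes / tactile events.
"""
from fractions import Fraction


def p_spike(n_spikes: int, n_events: int) -> Fraction:
    """Empirical spiking probability for one (V, T) condition."""
    if n_events <= 0 or not 0 <= n_spikes <= n_events:
        raise ValueError("need 0 <= n_spikes <= n_events and n_events > 0")
    return Fraction(n_spikes, n_events)


def pef(p_post: Fraction, p_pre: Fraction) -> float:
    """Post-illumination over pre-illumination spiking probability.

    Convention (ours): inf when the dark condition never spiked, nan if neither did.
    """
    if p_pre == 0:
        return float("inf") if p_post > 0 else float("nan")
    return float(p_post / p_pre)


# HYPOTHETICAL counts out of 50 touches for a weak tactile cue.
dark = p_spike(2, 50)          # pre-illumination (touch only)
after_light = p_spike(40, 50)  # post-illumination (visual cue, then touch)

if __name__ == "__main__":
    print(f"P_spike dark = {float(dark):.2f}, after light = {float(after_light):.2f}")
    print(f"PEF = {pef(after_light, dark):.1f}")
```

Using exact fractions keeps the ratio free of rounding error when the counts are small.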

Also note that the footprint of the visuotactile circuit is primarily determined by the dimensions of the 2D photo-memtransistor. Recently, we have shown ultra-scaled 2D devices with \(L_{\mathrm{CH}}\) down to 100 nm along with scaled contacts (\(L_{\mathrm{C}}\) down to 20 nm)53. Several other reports in the literature confirm the aggressive scalability of 2D devices20,54,55. The footprint of the visuotactile circuit can therefore be made significantly smaller through device-dimension scaling. However, the limiting factor will be the dimension of the photosensor, which is set by the diffraction limit of light. For the visible spectrum, photosensor dimensions have remained at the micrometer scale or larger. Also note that, instead of realizing the readout circuit using 2D photo-memtransistors, integrating 2D sensors with silicon CMOS is a viable alternative, but at the cost of increased processing and fabrication complexity and without significant performance advantages. In fact, many recent reports highlight the energy and area benefits of using 2D memtransistors for in-sensor and near-sensor processing and storage, since these multifunctional devices can serve as logic, memory, and sensing devices25,26,27,34,35,37,51,52,56,57,58,59,60,61,62. The motivation for our current work lies in integrating these photo-memtransistors with tactile sensors to produce efficient and reliable multisensory integration. Finally, we envision that arrays of multisensory neurons can enable more sophisticated visuotactile information processing.
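
To put the diffraction argument in numbers, the Abbe limit \(d = \lambda/(2\,\mathrm{NA})\) gives the smallest resolvable feature for light of wavelength \(\lambda\) focused with numerical aperture NA. The sketch below evaluates it across the visible band; the two NA values are illustrative choices, not parameters of this work. Even with a high-NA objective (NA = 0.9) the limit is roughly 200 to 400 nm, already larger than the 100 nm channel lengths mentioned above.

```python
"""Sketch: Abbe diffraction limit d = lambda / (2 NA) across the visible band.

The NA values are ILLUSTRATIVE; they are not parameters from this work.
"""


def abbe_limit_nm(wavelength_nm: float, numerical_aperture: float) -> float:
    """Smallest resolvable feature (nm) for the given wavelength and NA."""
    if wavelength_nm <= 0 or numerical_aperture <= 0:
        raise ValueError("wavelength and NA must be positive")
    return wavelength_nm / (2.0 * numerical_aperture)


VISIBLE_NM = (400.0, 550.0, 700.0)   # violet, green, red
NA_VALUES = (0.25, 0.9)              # modest lens vs. high-NA objective

if __name__ == "__main__":
    for na in NA_VALUES:
        spots = ", ".join(f"{abbe_limit_nm(w, na):.0f} nm" for w in VISIBLE_NM)
        print(f"NA = {na}: {spots}")
```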

Discussion

In conclusion, we have realized a visuotactile MN comprising a triboelectric tactile sensor and a monolayer MoS2 photo-memtransistor that offers all three characteristic features of multisensory integration, i.e., super-additive response, inverse effectiveness effect, and temporal congruency. We have also developed a visuotactile spike-encoder circuit that converts the analog current response of the MN into digital spikes. We believe that our demonstration of multisensory integration will advance the field of neuromorphic and bio-inspired computing, which to date has relied primarily on unimodal sensory information processing. We also believe that the impact of multisensory integration can be far-reaching, with applications in defense, space exploration, and many robotic and AI systems. Finally, the principles of multisensory integration can be extended beyond visuotactile information processing to other sensory stimuli, including auditory, olfactory, thermal, and gustatory stimuli.

Methods

Fabrication of local back-gate islands

To define the back-gate island regions, the substrate (285 nm SiO2 on p++-Si) was spin-coated with a bilayer resist stack consisting of EL6 and A3 resists at 4000 RPM for 45 s. After coating, the resists were baked at 150 °C for 90 s and 180 °C for 90 s, respectively. The bilayer resist was then patterned by e-beam lithography to define the islands and developed by immersing the substrate in a 1:1 MIBK:IPA solution for 60 s, followed by immersion in 2-propanol (IPA) for 45 s. A 20/50 nm Ti/Pt back-gate electrode was deposited by e-beam evaporation. The resist was removed using acetone and photoresist stripper (PRS 3000), and the substrate was cleaned using IPA and de-ionized (DI) water. Atomic layer deposition (ALD) was then used to grow 40 nm Al2O3, 3 nm HfO2, and 7 nm Al2O3 over the entire substrate, including the island regions. To access the individual Pt back-gate electrodes, etch patterns were defined using a bilayer photoresist consisting of LOR 5A and SPR 3012. The bilayer photoresist was exposed using a Heidelberg MLA 150 Direct Write exposure tool and developed using Microposit MF CD26. The 50 nm dielectric stack was subsequently dry-etched using a BCl3 chemistry at 5 °C for 20 s; this step was repeated four times to minimize heating of the substrate. Finally, the photoresist was removed using the same process mentioned above, exposing the individual Pt electrodes.
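
For orientation, the equivalent oxide thickness (EOT) of the 40/3/7 nm Al2O3/HfO2/Al2O3 stack follows from treating the layers as capacitors in series, \(\mathrm{EOT} = \sum_i t_i\,\kappa_{\mathrm{SiO_2}}/\kappa_i\). The relative permittivities used below (about 9 for ALD Al2O3 and 20 for HfO2) are typical literature values assumed for illustration, not measured values for these films, so the resulting EOT of roughly 21 nm is only an estimate.

```python
"""Sketch: equivalent oxide thickness (EOT) of the back-gate dielectric stack.

Layer thicknesses are from the Methods; the permittivities are ASSUMED
typical values for ALD films (actual values depend on deposition conditions).
"""

EPS0_F_PER_M = 8.8541878128e-12  # vacuum permittivity
K_SIO2 = 3.9

# (material, thickness in nm, assumed relative permittivity), gate side first
STACK = [("Al2O3", 40.0, 9.0), ("HfO2", 3.0, 20.0), ("Al2O3", 7.0, 9.0)]


def eot_nm(stack) -> float:
    """Series-capacitor EOT: sum of t_i * (k_SiO2 / k_i)."""
    return sum(t * K_SIO2 / k for _, t, k in stack)


def capacitance_uF_per_cm2(eot: float) -> float:
    """Areal gate capacitance (uF/cm^2) of an SiO2 layer `eot` nm thick."""
    c_f_per_m2 = EPS0_F_PER_M * K_SIO2 / (eot * 1e-9)
    return c_f_per_m2 * 100.0  # 1 F/m^2 = 100 uF/cm^2


if __name__ == "__main__":
    physical = sum(t for _, t, _ in STACK)
    eot = eot_nm(STACK)
    print(f"physical thickness = {physical:.0f} nm, EOT = {eot:.1f} nm, "
          f"C_ox = {capacitance_uF_per_cm2(eot):.3f} uF/cm^2")
```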

Large area monolayer MoS2 film growth

Monolayer MoS2 was grown on an epi-ready 2” c-plane sapphire substrate by metal-organic chemical vapor deposition (MOCVD) in a cold-wall horizontal reactor equipped with an inductively heated graphite susceptor, as previously described63. Molybdenum hexacarbonyl (Mo(CO)6) and hydrogen sulfide (H2S) were used as precursors. Mo(CO)6 was kept at 10 °C and 950 Torr in a stainless-steel bubbler, from which 0.036 sccm of the metal precursor was delivered for the growth; simultaneously, 400 sccm of H2S was introduced into the system. Before growth, the substrate was heated to 1000 °C in an H2 environment and held at this temperature for 10 min. MoS2 was then deposited at 1000 °C and 50 Torr in an H2 atmosphere, yielding monolayers within 18 min. After growth, the substrate was cooled to 300 °C in an H2S atmosphere to prevent decomposition of the MoS2 films. Further details can be found in our earlier work on this topic20,36,64.
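
Because sccm is a molar flow unit (a fixed number of moles per minute at standard conditions), the flows above directly give the sulfur-to-molybdenum supply ratio of the growth. A short sketch of the arithmetic, assuming ideal-gas behavior, one S atom per H2S molecule, and one Mo atom per Mo(CO)6 molecule, gives a ratio of about 1.1 × 10^4.

```python
"""Sketch: S/Mo precursor supply ratio implied by the MOCVD flows in the Methods.

ASSUMPTION: ideal-gas behavior, so sccm values are proportional to molar flow;
each H2S supplies one S atom and each Mo(CO)6 supplies one Mo atom.
"""

H2S_SCCM = 400.0        # H2S flow (from the text)
MO_CO6_SCCM = 0.036     # Mo(CO)6 flow delivered from the bubbler (from the text)
GROWTH_MIN = 18.0       # growth time (from the text)


def s_to_mo_ratio(h2s_sccm: float, mo_sccm: float) -> float:
    """Molar S:Mo supply ratio from the two precursor flows."""
    if mo_sccm <= 0:
        raise ValueError("metal precursor flow must be positive")
    return h2s_sccm / mo_sccm


if __name__ == "__main__":
    ratio = s_to_mo_ratio(H2S_SCCM, MO_CO6_SCCM)
    print(f"S:Mo supply ratio ~ {ratio:.3g}")
    print(f"Mo(CO)6 delivered in {GROWTH_MIN:g} min ~ "
          f"{MO_CO6_SCCM * GROWTH_MIN:.3f} standard cm^3")
```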

MoS2 film transfer to local back-gate islands

The MoS2 FETs were fabricated by transferring the MOCVD-grown monolayer MoS2 film from the sapphire growth substrate to the SiO2/p++-Si substrate with local back-gate islands using a PMMA (polymethyl methacrylate)-assisted wet transfer method. First, the MoS2 film on the sapphire substrate was spin-coated with PMMA and baked at 180 °C for 90 s. The corners of the spin-coated film were then carefully scratched using a razor blade, and the sample was immersed in a 1 M NaOH solution kept at 90 °C. Capillary action drew the NaOH into the substrate/film interface, separating the PMMA/MoS2 film from the sapphire substrate. The separated film was rinsed multiple times in a water bath to remove any remaining residues and contaminants. Finally, the film was transferred onto the SiO2/p++-Si substrate with local back-gate islands and baked at 50 °C and 70 °C for 10 min each to remove any moisture. The PMMA was subsequently removed using acetone, followed by cleaning with IPA.

Fabrication of monolayer MoS2 memtransistors

To define the channel regions of the MoS2 memtransistors, the substrate was first spin-coated with PMMA and baked at 180 °C for 90 s. The resist was then exposed by e-beam lithography and developed using 1:1 MIBK:IPA for 60 s, followed by immersion in IPA for 45 s. Subsequently, the monolayer MoS2 film was etched using sulfur hexafluoride (SF6) at 5 °C for 30 s, and the e-beam resist was removed by rinsing in acetone and IPA. To define the source and drain contacts, the sample was spin-coated with methyl methacrylate (MMA) followed by A3 PMMA, patterned by e-beam lithography, and developed using the same process detailed above. Next, 40 nm of nickel (Ni) and 30 nm of gold (Au) were deposited by e-beam evaporation as the contact metal. Finally, lift-off was performed by immersing the sample in acetone for 60 min, followed by IPA for 10 min, to remove the excess Ni/Au and leave only the source/drain patterns. Each island on the substrate contained one MoS2 memtransistor, allowing individual gate control.

Monolithic integration

The spike-encoding circuit comprises four MoS2 memtransistors. To interconnect the nodes, the substrate was spin-coated with MMA and PMMA, patterned by e-beam lithography, and developed using the same process detailed above. Subsequently, 60 nm of Ni and 30 nm of Au were deposited by e-beam evaporation. Finally, the e-beam resist was removed through lift-off by immersing the substrate in acetone and IPA for 60 min and 10 min, respectively.

Raman and photoluminescence (PL) spectroscopy

Raman and PL spectra of the MoS2 film were acquired using a Horiba LabRAM HR Evolution confocal Raman microscope equipped with a 532 nm laser. The laser power was set to 34 mW and attenuated to 1.08 mW using a 3.2% filter. Raman measurements were taken using a 100× objective with a numerical aperture of 0.9 and an 1800 gr/mm grating. For PL measurements, a 300 gr/mm grating was used.

Electrical characterization

The fabricated devices were electrically characterized using a Keysight B1500A parameter analyzer in a Lake Shore CRX-VF probe station, with measurements conducted under atmospheric conditions.

Data availability

The datasets generated during and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Code availability

The code used for plotting the data is available from the corresponding authors upon reasonable request.

References

Lemus, L., Hernández, A., Luna, R., Zainos, A. & Romo, R. Do sensory cortices process more than one sensory modality during perceptual judgments? Neuron 67, 335–348 (2010).

Zhou, Y.-D. & Fuster, J. M. Visuo-tactile cross-modal associations in cortical somatosensory cells. Proc. Natl Acad. Sci. USA 97, 9777–9782 (2000).

Zhou, Y.-D. & Fuster, J. M. Somatosensory cell response to an auditory cue in a haptic memory task. Behav. Brain Res. 153, 573–578 (2004).

Lemus, L., Hernández, A. & Romo, R. Neural codes for perceptual discrimination of acoustic flutter in the primate auditory cortex. Proc. Natl Acad. Sci. USA 106, 9471–9476 (2009).

Meredith, M. A. & Stein, B. E. Visual, auditory, and somatosensory convergence on cells in superior colliculus results in multisensory integration. J. Neurophysiol. 56, 640–662 (1986).

Bell, A. H., Meredith, M. A., Van Opstal, A. J. & Munoz, D. P. Crossmodal integration in the primate superior colliculus underlying the preparation and initiation of saccadic eye movements. J. Neurophysiol. 93, 3659–3673 (2005).

Diederich, A. & Colonius, H. Bimodal and trimodal multisensory enhancement: effects of stimulus onset and intensity on reaction time. Percept. Psychophys. 66, 1388–1404 (2004).

Jiang, W., Jiang, H. & Stein, B. E. Two corticotectal areas facilitate multisensory orientation behavior. J. Cogn. Neurosci. 14, 1240–1255 (2002).

Recanzone, G. H. Auditory influences on visual temporal rate perception. J. Neurophysiol. 89, 1078–1093 (2003).

Liu, L. et al. Stretchable neuromorphic transistor that combines multisensing and information processing for epidermal gesture recognition. ACS Nano 16, 2282–2291 (2022).

You, J. et al. Simulating tactile and visual multisensory behaviour in humans based on an MoS2 field effect transistor. Nano Res. 16, 7405–7412 (2023).

Jiang, C. et al. Mammalian-brain-inspired neuromorphic motion-cognition nerve achieves cross-modal perceptual enhancement. Nat. Commun. 14, 1344 (2023).

Wang, M. et al. Gesture recognition using a bioinspired learning architecture that integrates visual data with somatosensory data from stretchable sensors. Nat. Electron. 3, 563–570 (2020).

Yu, J. et al. Bioinspired mechano-photonic artificial synapse based on graphene/MoS2 heterostructure. Sci. Adv. 7, eabd9117 (2021).

Sun, L. et al. An artificial reflex arc that perceives afferent visual and tactile information and controls efferent muscular actions. Research 2022, 9851843 (2022).

Chen, G. et al. Temperature-controlled multisensory neuromorphic devices for artificial visual dynamic capture enhancement. Nano Res. 16, 7661–7670 (2023).

Han, J.-K., Yun, S.-Y., Yu, J.-M., Jeon, S.-B. & Choi, Y.-K. Artificial multisensory neuron with a single transistor for multimodal perception through hybrid visual and thermal sensing. ACS Appl. Mater. Interfaces 15, 5449–5455 (2023).

Yuan, R. et al. A calibratable sensory neuron based on epitaxial VO2 for spike-based neuromorphic multisensory system. Nat. Commun. 13, 3973 (2022).

Liu, L. et al. An artificial autonomic nervous system that implements heart and pupil as controlled by artificial sympathetic and parasympathetic nerves. Adv. Funct. Mater. 33, 2210119 (2023).

Sebastian, A., Pendurthi, R., Choudhury, T. H., Redwing, J. M. & Das, S. Benchmarking monolayer MoS2 and WS2 field-effect transistors. Nat. Commun. 12, 693 (2021).

Pendurthi, R. et al. Heterogeneous integration of atomically thin semiconductors for non-von Neumann CMOS. Small 18, 2202590 (2022).

Subbulakshmi Radhakrishnan, S. et al. A sparse and spike-timing-based adaptive photoencoder for augmenting machine vision for spiking neural networks. Adv. Mater. 34, 2202535 (2022).

Dodda, A., Trainor, N., Redwing, J. M. & Das, S. All-in-one, bio-inspired, and low-power crypto engines for near-sensor security based on two-dimensional memtransistors. Nat. Commun. 13, 3587 (2022).

Oberoi, A., Dodda, A., Liu, H., Terrones, M. & Das, S. Secure electronics enabled by atomically thin and photosensitive two-dimensional memtransistors. ACS Nano 15, 19815–19827 (2021).

Zheng, Y. et al. Hardware implementation of Bayesian network based on two-dimensional memtransistors. Nat. Commun. 13, 5578 (2022).

Chakrabarti, S. et al. Logic locking of integrated circuits enabled by nanoscale MoS2-based memtransistors. ACS Appl. Nano Mater. 5, 14447–14455 (2022).

Dodda, A. et al. Active pixel sensor matrix based on monolayer MoS2 phototransistor array. Nat. Mater. 21, 1379–1387 (2022).

Schranghamer, T. F. et al. Radiation resilient two-dimensional electronics. ACS Appl. Mater. Interfaces 15, 26946–26959 (2023).

Park, M. et al. MoS2‐based tactile sensor for electronic skin applications. Adv. Mater. 28, 2556–2562 (2016).

Wang, L. et al. Functionalized MoS2 nanosheet‐based field‐effect biosensor for label‐free sensitive detection of cancer marker proteins in solution. Small 10, 1101–1105 (2014).

Shokri, A. & Salami, N. Gas sensor based on MoS2 monolayer. Sens. Actuators B 236, 378–385 (2016).

Daus, A. et al. Fast-response flexible temperature sensors with atomically thin molybdenum disulfide. Nano Lett. 22, 6135–6140 (2022).

Chen, J. et al. An intelligent MXene/MoS2 acoustic sensor with high accuracy for mechano-acoustic recognition. Nano Res. 16, 3180–3187 (2023).

Sebastian, A., Pannone, A., Radhakrishnan, S. S. & Das, S. Gaussian synapses for probabilistic neural networks. Nat. Commun. 10, 1–11 (2019).

Jayachandran, D. et al. A low-power biomimetic collision detector based on an in-memory molybdenum disulfide photodetector. Nat. Electron. 3, 646–655 (2020).

Dodda, A. et al. Stochastic resonance in MoS2 photodetector. Nat. Commun. 11, 4406 (2020).

Arnold, A. J. et al. Mimicking neurotransmitter release in chemical synapses via hysteresis engineering in MoS2 transistors. ACS Nano 11, 3110–3118 (2017).

Nasr, J. R. et al. Low-power and ultra-thin MoS2 photodetectors on glass. ACS Nano 14, 15440–15449 (2020).

Das, S., Dodda, A. & Das, S. A biomimetic 2D transistor for audiomorphic computing. Nat. Commun. 10, 3450 (2019).

Das, S. Two dimensional electrostrictive field effect transistor (2D-EFET): a sub-60mV/decade steep slope device with high ON current. Sci. Rep. 6, 34811 (2016).

English, C. D., Smithe, K. K. H., Xu, R. L. & Pop, E. Approaching ballistic transport in monolayer MoS2 transistors with self-aligned 10 nm top gates. In 2016 IEEE International Electron Devices Meeting (IEDM) 5.6.1–5.6.4 https://doi.org/10.1109/IEDM.2016.7838355 (IEEE, 2016).

Shen, P.-C. et al. Ultralow contact resistance between semimetal and monolayer semiconductors. Nature 593, 211–217 (2021).

Nikonov, D. E. & Young, I. A. Benchmarking of beyond-CMOS exploratory devices for logic integrated circuits. IEEE J. Explor. Solid State Comput. Devices Circuits 1, 3–11 (2015).

Sylvia, S. S., Alam, K. & Lake, R. K. Uniform benchmarking of low-voltage van der Waals FETs. IEEE J. Explor. Solid State Comput. Devices Circuits 2, 28–35 (2016).

Lee, C.-S., Cline, B., Sinha, S., Yeric, G. & Wong, H. S. P. 32-bit Processor core at 5-nm technology: analysis of transistor and interconnect impact on VLSI system performance. In 2016 IEEE International Electron Devices Meeting (IEDM) 28.3.1–28.3.4 https://doi.org/10.1109/IEDM.2016.7838498 (IEEE, 2016).

Khakifirooz, A., Nayfeh, O. M. & Antoniadis, D. A simple semiempirical short-channel MOSFET current–voltage model continuous across all regions of operation and employing only physical parameters. IEEE Trans. Electron Devices 56, 1674–1680 (2009).

Xie, D. et al. Photoelectric visual adaptation based on 0D‐CsPbBr3‐quantum‐dots/2D‐MoS2 Mixed‐Dimensional Heterojunction Transistor. Adv. Funct. Mater. 31, 2010655 (2021).

Feng, G. et al. Flexible vertical photogating transistor network with an ultrashort channel for in‐sensor visual nociceptor. Adv. Funct. Mater. 31, 2104327 (2021).

Li, Y. et al. Biopolymer-gated ionotronic junctionless oxide transistor array for spatiotemporal pain-perceptual emulation in nociceptor network. Nanoscale 14, 2316–2326 (2022).

Jiang, J. et al. 2D electric-double-layer phototransistor for photoelectronic and spatiotemporal hybrid neuromorphic integration. Nanoscale 11, 1360–1369 (2019).

Subbulakshmi Radhakrishnan, S., Dodda, A. & Das, S. An all-in-one bioinspired neural network. ACS Nano 16, 20100–20115 (2022).

Dodda, A. et al. Bioinspired and low-power 2D machine vision with adaptive machine learning and forgetting. ACS Nano 16, 20010–20020 (2022).

Schranghamer, T. F. et al. Ultrascaled contacts to monolayer MoS2 field effect transistors. Nano Lett. 23, 3426–3434 (2023).

Smets, Q. et al. Ultra-scaled MOCVD MoS2 MOSFETs with 42 nm contact pitch and 250 µA/µm drain current. In 2019 IEEE International Electron Devices Meeting (IEDM) 23.2.1–23.2.4 (IEEE, 2019).

Das, S. et al. Transistors based on two-dimensional materials for future integrated circuits. Nat. Electron. 4, 786–799 (2021).

Ravichandran, H. et al. A monolithic stochastic computing architecture for energy efficient arithmetic. Adv. Mater. 35, 2206168 (2023).

Jayachandran, D. et al. Insect-inspired, spike-based, in-sensor, and night-time collision detector based on atomically thin and light-sensitive memtransistors. ACS Nano (2022).

Sebastian, A. et al. Two-dimensional materials-based probabilistic synapses and reconfigurable neurons for measuring inference uncertainty using Bayesian neural networks. Nat. Commun. 13, 1–10 (2022).

Radhakrishnan, S. S. et al. A sparse and spike‐timing‐based adaptive photo encoder for augmenting machine vision for spiking neural networks. Adv. Mater. 34, 2202535 (2022).

Dodda, A., Trainor, N., Redwing, J. & Das, S. All-in-one, bio-inspired, and low-power crypto engines for near-sensor security based on two-dimensional memtransistors. Nat. Commun. 13, 1–12 (2022).

Pendurthi, R. et al. Heterogeneous integration of atomically thin semiconductors for non‐von Neumann CMOS. Small 18, 2202590 (2022).

Sebastian, A., Das, S. & Das, S. An annealing accelerator for ising spin systems based on in-memory complementary 2D FETs. Adv. Mater. 34, 2107076 (2022).

Xuan, Y. et al. Multi-scale modeling of gas-phase reactions in metal-organic chemical vapor deposition growth of WSe2. J. Cryst. Growth 527, 125247 (2019).

Dodda, A. & Das, S. Demonstration of stochastic resonance, population coding, and population voting using artificial MoS2 based synapses. ACS Nano 15, 16172–16182 (2021).

Acknowledgements

The work was supported by the Army Research Office (ARO) through Contract Number W911NF1810268 and National Science Foundation (NSF) through CAREER Award under Grant Number ECCS-2042154. The authors also express their gratitude for the 2D material support provided by Dr. Joan M. Redwing and the Pennsylvania State University 2D Crystal Consortium–Materials Innovation Platform (2DCCMIP).

Author information

Contributions

S.D. conceived the idea and designed the experiments. S.D., M.S., N.S., A.P., and H.R. performed the measurements, analyzed the data, discussed the results, and agreed on their implications. All authors contributed to the preparation of the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Adrian Ionescu, Hongzhou Zhang, and the other anonymous reviewer for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Sadaf, M.U.K., Sakib, N.U., Pannone, A. et al. A bio-inspired visuotactile neuron for multisensory integration. Nat Commun 14, 5729 (2023). https://doi.org/10.1038/s41467-023-40686-z
